The Paper

Greybox Fuzzing for Concurrency Testing

Paper Link

https://abhikrc.com/pdf/ASPLOS24.pdf

Format

We start at 6:10. Don't be late!

The discussion lasts for about 1 to 1.5 hours, depending upon the paper.

Read the paper (done before you arrive)

Introductions (name and background)

First impressions (1-2 minutes: "this is what I thought")

Structured review (we move through the paper in order, everyone gets a chance to ask questions, offer comments, and raise concerns)

Free form discussion

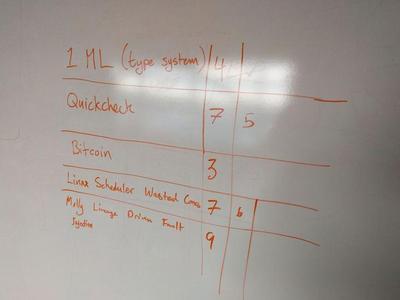

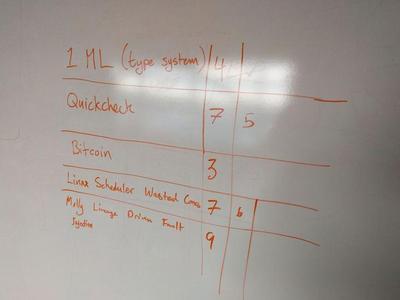

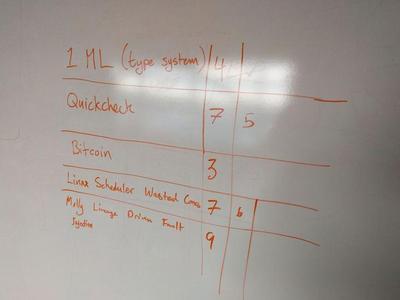

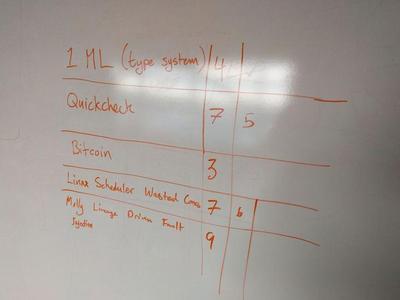

Nominate and vote on the next paper

Abstract

Uncovering bugs in concurrent programs is a challenging problem owing to the exponentially large search space of thread interleavings. Past approaches towards concurrency testing are either optimistic - relying on random sampling of these interleavings - or pessimistic - relying on systematic exploration of a reduced (bounded) search space. In this work, we suggest a fresh, pragmatic solution neither focused only on formal, systematic testing, nor solely on unguided sampling or stress-testing approaches. We employ a biased random search which guides exploration towards neighborhoods which will likely expose new behavior. As such it is thematically similar to greybox fuzz testing, which has proven to be an effective technique for finding bugs in sequential programs. To identify new behaviors in the domain of interleavings, we prune and navigate the search space using the "reads-from" relation. Our approach is significantly more efficient at finding bugs per schedule exercised than other state-of-the-art concurrency testing tools and approaches. Experiments on widely used concurrency datasets also show that our greybox fuzzing inspired approach gives a strict improvement over a randomized baseline scheduling algorithm in practice via a more uniform exploration of the schedule space. We make our concurrency testing infrastructure "Reads-From Fuzzer" (RFF) available for experimentation and usage by the wider community to aid future research.
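As a warm-up for the discussion, here is a toy Python sketch of the idea in the abstract: a feedback loop that keeps a schedule only when it produces a reads-from relation not seen before. This is not RFF's actual algorithm or API. The two-thread program, the names `PROGRAM`, `run`, `mutate`, and `fuzz`, and the adjacent-swap mutation are all assumptions made up for illustration.

```python
import random

# Hypothetical toy program: each thread does a non-atomic increment
# (read x into a register, then write register + 1 back to x).
PROGRAM = {
    "T1": [("read", "x"), ("write", "x")],
    "T2": [("read", "x"), ("write", "x")],
}


def run(schedule):
    """Execute one schedule (a list of thread names).

    Returns the final memory and the reads-from relation: a frozenset of
    (read_event, write_event) pairs, where an event is (thread, index)
    and the initial value counts as the pseudo-write "init".
    """
    memory = {"x": 0}
    last_writer = {"x": "init"}
    pc = {t: 0 for t in PROGRAM}        # per-thread program counter
    register = {t: 0 for t in PROGRAM}  # per-thread local register
    reads_from = set()
    for t in schedule:
        op, var = PROGRAM[t][pc[t]]
        event = (t, pc[t])
        if op == "read":
            register[t] = memory[var]
            reads_from.add((event, last_writer[var]))
        else:
            memory[var] = register[t] + 1
            last_writer[var] = event
        pc[t] += 1
    return memory, frozenset(reads_from)


def random_schedule(rng):
    """Any shuffle of the thread names is a valid interleaving, because
    each thread still executes its own operations in program order."""
    slots = [t for t, ops in PROGRAM.items() for _ in ops]
    rng.shuffle(slots)
    return slots


def mutate(schedule, rng):
    """Apply one to three adjacent swaps (an assumed mutation operator)."""
    s = list(schedule)
    for _ in range(rng.randint(1, 3)):
        i = rng.randrange(len(s) - 1)
        s[i], s[i + 1] = s[i + 1], s[i]
    return s


def fuzz(iterations=200, seed=0):
    """Greybox-style loop: the feedback signal is the reads-from relation."""
    rng = random.Random(seed)
    corpus = [random_schedule(rng)]
    seen = set()   # reads-from relations observed so far
    bugs = []      # schedules that lose an update (final x != 2)
    for _ in range(iterations):
        candidate = mutate(rng.choice(corpus), rng)
        memory, rf = run(candidate)
        if rf not in seen:          # new behavior: keep it as a seed
            seen.add(rf)
            corpus.append(candidate)
        if memory["x"] != 2 and candidate not in bugs:
            bugs.append(candidate)
    return seen, bugs


if __name__ == "__main__":
    seen, bugs = fuzz()
    print(len(seen), "distinct reads-from relations")
    print(len(bugs), "buggy schedules, e.g.", bugs[:1])
```

In this toy program there are six possible interleavings but only three distinct reads-from relations, so keying the feedback on reads-from collapses schedules that behave identically. That is the pruning intuition; how the paper actually selects and mutates schedules is something to check against the text during the structured review.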